Shoppable images promise quick wins but plateau fast — not because of design, but because of the operational burden underneath. Here's what ecommerce teams should know in 2026.

QUICK ANSWER — Shoppable images deliver early conversion gains but plateau because of manual tagging overhead, catalog sync drift, and weak attribution across placements. Static interactivity cannot replicate the product storytelling and real-time engagement that video provides. Ecommerce teams get better long-term ROI by pairing shoppable images with shoppable video for high-intent product discovery.

Table of Contents

- How Shoppable Images Actually Work (and Where the Complexity Hides)

- The Tagging and Catalog Sync Problem That Scales Badly

- Why Static Interactivity Caps Out on Conversion

- Where Shoppable Images Still Earn Their Place

- When Shoppable Video Works Better Than Shoppable Images

- Measuring Shoppable Content Without Placement-Level Attribution

- Frequently Asked Questions

Shoppable images are static images with clickable hotspots or product overlays that let shoppers move directly from inspiration to product discovery or purchase. Nearly 70% of ecommerce teams that deploy them report measurable conversion gains in the first quarter, according to Forrester's 2025 digital experience survey — but fewer than 20% sustain that lift past month six. The gap isn't a creative problem or a UX problem. It's an operational one: manual product tagging that doesn't scale, catalog data that drifts out of sync within days, and analytics that can't tell you which placement on which page actually drove the sale. This article maps exactly where shoppable images earn their keep, where they stall, and what the smarter content investment looks like for ecommerce teams in 2026.

How Shoppable Images Actually Work (and Where the Complexity Hides)

At the surface level, the concept is simple. A product image gets layered with interactive hotspots — small clickable markers tied to specific SKUs. A shopper taps a hotspot, sees a product card with pricing and a quick-add button, and can move toward checkout without leaving the page. The format collapses the distance between inspiration and purchase, which is why early adoption numbers look strong.

Beneath that surface, the mechanics get complicated quickly. Every hotspot requires a manual association between a pixel coordinate on the image and a live SKU in your product catalog. That association is static. If the image gets cropped for mobile, the hotspot coordinates shift. If the SKU goes out of stock or the price changes, the hotspot either shows stale data or breaks entirely. Multiply this by hundreds of images across PDPs, category pages, editorial lookbooks, and homepage banners, and you have a maintenance problem that grows linearly with your catalog.
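
One common way to soften the cropping problem is to store hotspot positions as fractions of the original image rather than as pixel coordinates, then remap them whenever a crop is applied. The sketch below is a minimal illustration under that assumption; the `Hotspot` and `Crop` types, the SKU values, and the crop itself are hypothetical and not taken from any particular platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Hotspot:
    sku: str
    x: float  # horizontal position as a fraction (0..1) of the original image width
    y: float  # vertical position as a fraction (0..1) of the original image height


@dataclass(frozen=True)
class Crop:
    # Crop rectangle, also expressed as fractions of the original image.
    left: float
    top: float
    width: float
    height: float


def remap_hotspot(hotspot: Hotspot, crop: Crop) -> Optional[Hotspot]:
    """Return the hotspot's position inside the cropped image, or None if the crop cuts it out."""
    new_x = (hotspot.x - crop.left) / crop.width
    new_y = (hotspot.y - crop.top) / crop.height
    if not (0.0 <= new_x <= 1.0 and 0.0 <= new_y <= 1.0):
        return None
    return Hotspot(hotspot.sku, new_x, new_y)


# Hypothetical mobile crop that keeps the middle half of the image width.
mobile_crop = Crop(left=0.25, top=0.0, width=0.5, height=1.0)
lamp = Hotspot(sku="LAMP-01", x=0.5, y=0.4)
rug = Hotspot(sku="RUG-07", x=0.1, y=0.9)

print(remap_hotspot(lamp, mobile_crop))  # still visible inside the crop
print(remap_hotspot(rug, mobile_crop))   # falls outside the crop, so None
```

Remapping keeps a hotspot on the right product after a crop, but it cannot decide what to do when a product is cropped out entirely. That remains an editorial call.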

Most platforms that offer shoppable image functionality treat the image as a standalone asset. The tagging happens inside a visual editor, separate from your PIM or product feed. That separation is where complexity hides. Your merchandising team tags an image on Monday. By Wednesday, three of the five tagged products have new pricing. By Friday, one is discontinued. Nobody re-opens the editor to update the hotspots because nobody has a workflow that flags the drift.
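
The missing workflow described above could be approximated by a scheduled check that compares what was true at tagging time against the current catalog. The following is a rough sketch, assuming the tagging tool can export a snapshot of each tag and that the catalog is available as a simple lookup by SKU; every name and value in it is illustrative.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class TagSnapshot:
    image_id: str
    sku: str
    price_at_tagging: float


@dataclass(frozen=True)
class CatalogEntry:
    price: float
    in_stock: bool


def find_drift(tags: List[TagSnapshot], catalog: Dict[str, CatalogEntry]) -> List[str]:
    """Return one warning per tag that no longer matches the catalog."""
    warnings = []
    for tag in tags:
        entry = catalog.get(tag.sku)
        if entry is None:
            warnings.append(f"{tag.image_id}: {tag.sku} is no longer in the catalog")
        elif not entry.in_stock:
            warnings.append(f"{tag.image_id}: {tag.sku} is out of stock")
        elif entry.price != tag.price_at_tagging:
            warnings.append(
                f"{tag.image_id}: {tag.sku} price changed from "
                f"{tag.price_at_tagging:.2f} to {entry.price:.2f}"
            )
    return warnings


# Hypothetical tags created on Monday.
tags = [
    TagSnapshot("lookbook-01", "SOFA-1", 899.00),
    TagSnapshot("lookbook-01", "LAMP-2", 49.00),
    TagSnapshot("hero-spring", "RUG-3", 120.00),
    TagSnapshot("hero-spring", "VASE-4", 25.00),
]

# Hypothetical catalog state on Friday: one price change, one stock-out, one discontinued SKU.
catalog = {
    "SOFA-1": CatalogEntry(899.00, True),
    "LAMP-2": CatalogEntry(39.00, True),
    "RUG-3": CatalogEntry(120.00, False),
}

drift_report = find_drift(tags, catalog)
for line in drift_report:
    print(line)
```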

Ray-Ban's experience with PDP performance illustrates how much friction matters at this layer. According to Google's web.dev case study, Ray-Ban achieved a +101% conversion-rate lift on mobile PDPs simply by reducing the perceived load time between product listing and detail pages through prerendering. The takeaway for shoppable image teams: any interactive overlay that adds latency or stale data to the product experience erodes the very conversion gains it was designed to create.

The real cost isn't the technology. It's the ongoing human labor to keep every tagged image accurate, current, and correctly rendered across breakpoints.

The Tagging and Catalog Sync Problem That Scales Badly

A brand with 200 SKUs and 30 shoppable images can manage tagging manually. A brand with 5,000 SKUs and 400 images across seasonal campaigns cannot. The math breaks down because tagging is a point-in-time activity, but catalogs are living systems.

Consider what happens during a typical product lifecycle. A new colorway launches. A price promotion runs for 72 hours. A size goes out of stock. A product gets pulled from a regional market. Each of these events should update every shoppable image where that product appears. In practice, most teams discover the mismatch only when a customer clicks a hotspot and lands on an out-of-stock PDP — or worse, sees a price that doesn't match what they were just shown.

Some platforms offer automated product feed connections that sync pricing and inventory in real time. But the coordinate-level tagging — which product appears where on which image — still requires a human decision. You can automate the data behind the hotspot. You cannot easily automate the placement of the hotspot itself, because placement depends on visual composition, not data structure.
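
To make that split concrete: the human decision can be reduced to "this SKU sits at this spot", while everything shown in the product card is looked up from the feed at render time. The sketch below assumes a feed exposed as a dictionary keyed by SKU. The field names and the choice to hide out-of-stock items are assumptions for illustration, not a description of any vendor's behavior.

```python
from typing import Dict, List, Optional, TypedDict


class FeedItem(TypedDict):
    title: str
    price: float
    in_stock: bool


def build_card(sku: str, feed: Dict[str, FeedItem]) -> Optional[dict]:
    """Build product-card data from the live feed, or None if the hotspot should be hidden."""
    item = feed.get(sku)
    if item is None or not item["in_stock"]:
        return None
    return {"sku": sku, "title": item["title"], "price": item["price"]}


def visible_cards(tagged_skus: List[str], feed: Dict[str, FeedItem]) -> List[dict]:
    """Resolve every tagged SKU against the feed and drop the ones that cannot be sold."""
    cards = (build_card(sku, feed) for sku in tagged_skus)
    return [card for card in cards if card is not None]


# The tag stores only SKUs; prices are never copied into it.
tagged_skus = ["SOFA-1", "LAMP-2", "RUG-3"]

# Hypothetical feed state at render time.
feed: Dict[str, FeedItem] = {
    "SOFA-1": {"title": "Three-seat sofa", "price": 899.0, "in_stock": True},
    "LAMP-2": {"title": "Floor lamp", "price": 39.0, "in_stock": True},
    "RUG-3": {"title": "Wool rug", "price": 120.0, "in_stock": False},
}

print(visible_cards(tagged_skus, feed))
```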

For teams running 50+ campaigns per year across multiple markets, this creates a hidden staffing cost. One major European fashion retailer told us their visual merchandising team spends roughly 12 hours per week maintaining shoppable image tags — time that doesn't show up in any technology budget but directly competes with higher-value creative work.

The scaling problem gets worse when you add channels. The same image tagged for your homepage hero needs different hotspot positions when it appears in an email, a social ad, or a partner site embed. Each placement is a separate tagging job. Each one drifts independently. The result is a content operation that looks efficient at launch and becomes a drag on the team within two quarters.

Why Static Interactivity Caps Out on Conversion

Shoppable images are interactive, but they are not dynamic. A hotspot reveals a product card. The shopper sees a thumbnail, a price, maybe a size selector. Then they decide: add to cart or move on. The entire interaction takes three to five seconds.

That brevity is both the strength and the ceiling. For products where the purchase decision is low-consideration — a lipstick shade, a phone case, a candle — three seconds of context may be enough. For anything that requires fit assessment, material understanding, or styling context, a static overlay cannot do the job. The shopper needs to see the product in motion, on a body, in a room, or explained by someone who knows it.

According to Google's performance research, 20% of videos across the web include the autoplay attribute, and YouTube embeds can block the main thread for more than 1.7 seconds on the median website. Costs like these help explain why many brands default to images: not because images convert better, but because video has historically been harder to implement without degrading page speed. The choice is often driven by technical constraints rather than by what shoppers actually prefer.

The conversion ceiling shows up clearly in A/B test data. Teams typically see a 5–15% lift when they first add shoppable hotspots to editorial images. That lift holds for the first few months as the novelty factor drives clicks. Then engagement flattens. Shoppers learn what the hotspots do, and the ones who were going to buy have already bought. The format doesn't create new demand. It captures existing intent slightly faster.

For high-consideration categories — furniture, electronics, fashion — the gap between what a static hotspot can communicate and what a shopper needs to know before buying is too wide. A tagged image of a sofa tells you the price. A video of someone sitting on that sofa, showing the cushion depth, the fabric texture, and the scale against a real room, tells you whether to buy it.

Where Shoppable Images Still Earn Their Place

None of this means shoppable images are obsolete. They solve a real problem in specific contexts, and dismissing them entirely would be a mistake.

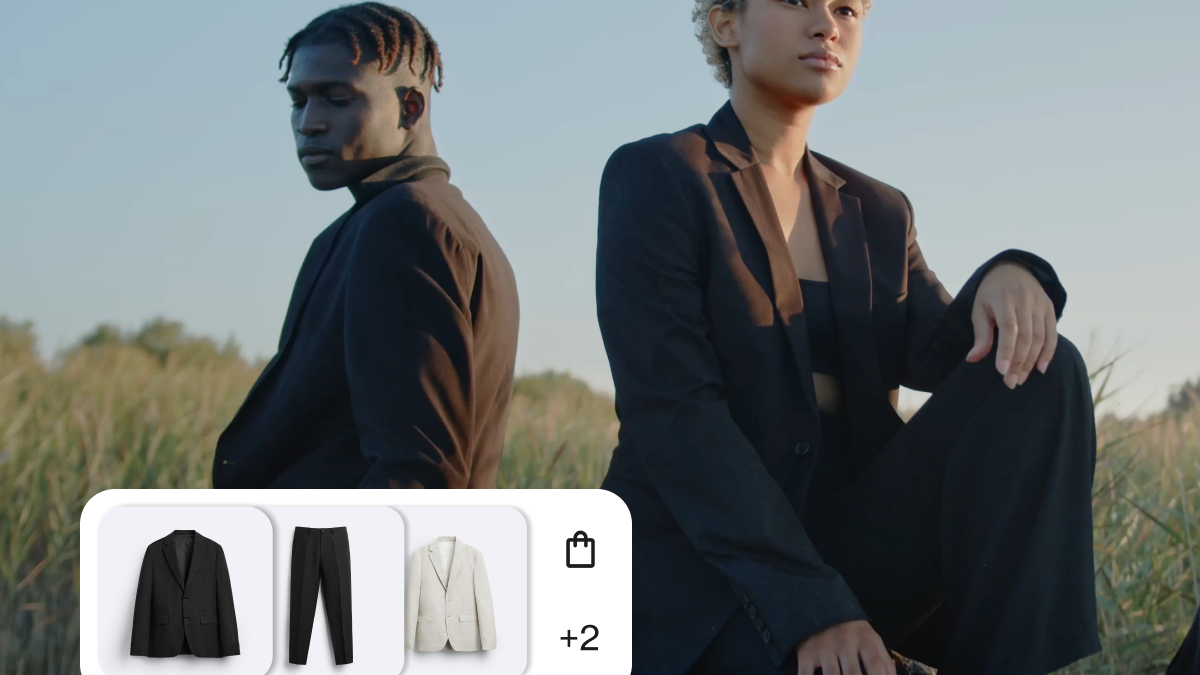

Editorial lookbooks are the clearest use case. A curated lifestyle image featuring multiple products — a styled outfit, a decorated room, a beauty flat-lay — benefits from hotspots because the image itself is the discovery mechanism. The shopper wasn't searching for a specific product. They were browsing for inspiration, and the hotspot converts that inspiration into a product page visit without forcing them to reverse-search each item.

Homepage hero banners and seasonal campaign pages also benefit, particularly when the image features a small, curated selection of products (three to five). The tagging burden stays manageable, the products are high-priority so they get updated first, and the placement is prominent enough to justify the maintenance cost.

Email campaigns represent another strong fit. A single shoppable image in an email can outperform a grid of product thumbnails because it tells a visual story while still enabling direct action. The tagging is a one-time effort per send, and the product set is locked at the moment of deployment — no sync drift to worry about.

Social commerce integrations, where platforms like Instagram and Pinterest already support native product tagging on images, extend the format into channels where shoppers expect it. Here, the platform handles much of the catalog sync, reducing the operational burden on your team.

The pattern is consistent: shoppable images work best when the product set is small, the image is curated, and the tagging is a bounded task rather than an ongoing maintenance obligation. Once you move beyond those boundaries — into large catalogs, dynamic pricing, or high-consideration products — the format starts working against you.

When Shoppable Video Works Better Than Shoppable Images

The limitations of static interactivity point directly to what video adds. A shoppable video doesn't just tag products — it demonstrates them. A host picks up the product, turns it around, explains why it matters, answers questions in real time. The shopper gets context that no hotspot overlay can deliver.

Kappahl, the Scandinavian fashion retailer, saw a 36.54% conversion rate among viewers who interacted with in-show polls during live video events, compared to 7.53% among non-exposed viewers. That nearly five-to-one ratio (about 4.9x) didn't come from better tagging or smarter hotspot placement. It came from active participation: shoppers who engaged with the content made faster, more confident purchase decisions.

Video also solves the catalog sync problem structurally. Platforms with automated product feed sync update pricing, inventory, and variant availability across all video experiences in real time. When a product goes out of stock during a live show, the overlay updates instantly. No human re-tags anything. The connection between content and catalog is maintained by the system, not by a merchandiser reopening an editor.

Content repurposing changes the economics entirely. A single 30-minute live shopping event can generate dozens of short, tagged video clips — each one a standalone shoppable asset that can be embedded on PDPs, category pages, or campaign landing pages. The tagging happens once, at the source. Every derivative clip inherits the product associations automatically. Compare that to shoppable images, where every new crop or placement requires a new round of manual tagging.
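
One plausible way for clips to inherit tags is to attach each product to a time range in the source recording and carry over every tag whose range overlaps the clip. The sketch below shows that idea only; the `ProductTag` type, the timestamps, and the overlap rule are assumptions for illustration, not a description of how any specific platform implements it.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ProductTag:
    sku: str
    start_s: float  # second at which the product comes on screen
    end_s: float    # second at which it leaves


def tags_for_clip(source_tags: List[ProductTag], clip_start_s: float, clip_end_s: float) -> List[ProductTag]:
    """Return the source tags that overlap the clip, shifted onto the clip's own timeline."""
    inherited = []
    for tag in source_tags:
        overlaps = tag.start_s < clip_end_s and tag.end_s > clip_start_s
        if overlaps:
            inherited.append(
                ProductTag(
                    tag.sku,
                    max(tag.start_s, clip_start_s) - clip_start_s,
                    min(tag.end_s, clip_end_s) - clip_start_s,
                )
            )
    return inherited


# Hypothetical tags made once, on the full live show.
show_tags = [
    ProductTag("JACKET-1", 60.0, 300.0),
    ProductTag("BOOTS-2", 240.0, 600.0),
    ProductTag("SCARF-3", 900.0, 1200.0),
]

# A two-minute clip cut from 3:20 to 5:20 of the show.
clip_tags = tags_for_clip(show_tags, 200.0, 320.0)
print(clip_tags)
```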

Bambuser data shows that shoppers watching shoppable video are 225% more likely to add items to cart compared to static product pages. That gap reflects the difference between showing a product and explaining it — between a three-second hotspot interaction and a two-minute demonstration that builds confidence.

The operational advantage compounds over time. A team that produces one live show per week and auto-generates clips from each session builds a library of shoppable video content that grows without proportional labor. A team that maintains shoppable images manually faces a maintenance burden that grows in lockstep with their catalog.

Measuring Shoppable Content Without Placement-Level Attribution

The hardest question for any ecommerce team running shoppable content — images or video — is attribution. You know the content exists on the page. You know the page converted. But did the shoppable element cause the conversion, or was the shopper already going to buy?

Most analytics setups attribute the sale to the last click before checkout. If a shopper interacted with a shoppable image hotspot, browsed two more pages, and then added the product to cart from the PDP, the shoppable image gets zero credit. The interaction happened, but the attribution model doesn't capture it.

Placement-level attribution — knowing which specific shoppable element on which specific page influenced the purchase — requires event-level tracking that most image-tagging platforms don't provide. You get aggregate metrics: total hotspot clicks, click-through rate to PDP, maybe add-to-cart events from the overlay. You don't get a view of how the shoppable image contributed to a purchase that completed three pages later.
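
The difference between the two models is easier to see on a concrete session. The sketch below replays the scenario described above (hotspot click, two page views, add to cart from the PDP) against a strict last-click rule and a simple assisted-credit rule. The event names, the `Event` shape, and both rules are simplified assumptions, not how any particular analytics product works.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Event:
    kind: str            # "hotspot_click", "page_view", "add_to_cart" or "purchase"
    sku: str = ""        # empty for events not tied to a product
    placement: str = ""  # e.g. "homepage_hero"; only set on hotspot clicks


def attribute_session(events: List[Event]) -> Dict[str, List[str]]:
    """Compare last-click and assisted credit for one session's ordered events."""
    purchased = {e.sku for e in events if e.kind == "purchase"}
    last_click: List[str] = []
    assisted: List[str] = []
    for i, event in enumerate(events):
        if event.kind != "add_to_cart" or event.sku not in purchased:
            continue
        earlier = events[:i]
        # Last-click: only the event right before the add-to-cart can earn credit.
        if earlier and earlier[-1].kind == "hotspot_click" and earlier[-1].sku == event.sku:
            last_click.append(earlier[-1].placement)
        # Assisted: any earlier hotspot click on the same SKU earns credit.
        for prior in earlier:
            if prior.kind == "hotspot_click" and prior.sku == event.sku:
                assisted.append(prior.placement)
    return {"last_click": last_click, "assisted": assisted}


session = [
    Event("hotspot_click", sku="SOFA-1", placement="homepage_hero"),
    Event("page_view"),
    Event("page_view"),
    Event("add_to_cart", sku="SOFA-1"),
    Event("purchase", sku="SOFA-1"),
]

credit = attribute_session(session)
print(credit)
```

Under the last-click rule the homepage hero earns nothing. Under the assisted rule it is credited, which is only possible because each hotspot click was recorded with its placement and SKU.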

Video commerce platforms have an advantage here because the interaction is longer and more trackable. A viewer who watches 45 seconds of a shoppable video clip and then clicks a product overlay generates a richer behavioral signal than a viewer who taps a hotspot for two seconds. That signal — watch time, product card views, replay behavior, add-to-cart from within the player — feeds attribution models with more data points per session.

Elkjøp, the Nordic electronics retailer, tracks a 30% conversion rate from video consultation sessions, with an average order value of $470. Those numbers are attributable because the entire purchase journey happens within a single, trackable session. No guessing about which page element drove the sale.

The measurement gap matters because it determines budget allocation. If you can't prove that shoppable images drove incremental revenue — not just clicks, but actual purchases — you can't defend the team hours spent maintaining them. Video, with its deeper interaction data and session-level attribution, gives ecommerce leaders the evidence they need to justify continued investment.

Without placement-level attribution, shoppable content of any kind becomes a cost center that feels productive but can't prove its value. The teams that solve this problem first — by choosing formats that generate attributable signals — are the ones that keep their budgets.

Frequently Asked Questions

How do shoppable images affect page load speed and Core Web Vitals?

Shoppable images can affect performance if the interactive layer loads too early or fetches too much product data on page load. The safest approach is to lazy-load the interactive layer after the image is visible and avoid unnecessary product-data requests until the shopper interacts. This keeps Largest Contentful Paint and Interaction to Next Paint within acceptable thresholds while preserving the interactive experience.

Can shoppable images pull real-time pricing and inventory from my product feed?

Some platforms support automated product feed connections that sync pricing and stock levels in real time, so hotspot overlays always show current data. Others treat the tag as a static association — the price shown is whatever was entered when the image was tagged, and it doesn't update unless someone manually edits it. Before choosing a platform, ask whether it supports live product feed sync via API or data feed (not just CSV upload). Also confirm whether out-of-stock products are automatically hidden or flagged in the overlay, rather than showing a dead link that leads to an unavailable PDP.

What is the difference between shoppable images and shoppable video for product discovery?

Shoppable images let shoppers click tagged hotspots on a static photo to view product details and add to cart. The interaction is brief (typically under five seconds) and limited to what a product card can show: thumbnail, price, and a buy button. Shoppable video embeds the same purchase functionality inside a moving, narrated experience. Viewers see products demonstrated, styled, or explained in context, which builds purchase confidence for higher-consideration items. Video also generates richer behavioral data (watch time, replay rate, product card interaction depth) that feeds stronger attribution models. For low-consideration impulse products, shoppable images perform well. For anything requiring fit, texture, scale, or use-case understanding, shoppable video closes the gap between browsing and buying.

Do AI search engines like Google AI Overviews index shoppable image content?

Currently, AI search engines index the underlying page content and structured data, not the interactive hotspot layer itself. If your shoppable image page includes proper product schema markup (Product, Offer, ImageObject), AI systems can reference the products shown. But the hotspot interaction — which product is tagged where on the image — is invisible to crawlers. Shoppable video has an advantage here because platforms can generate VideoObject schema, transcripts, and structured metadata that AI systems parse directly. Brands optimizing for AI-powered discovery in 2026 should ensure every shoppable content page includes complete structured data, regardless of whether the interactive layer itself is crawlable.
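
As a rough illustration of what "complete structured data" can mean for a page with a tagged image, the sketch below builds a schema.org `Product` with a nested `Offer` and `ImageObject` as JSON-LD. The helper name, the product values, and the example URL are hypothetical. Which properties a given search engine actually requires is not covered here and should be checked against its own documentation.

```python
import json


def product_json_ld(name: str, sku: str, image_url: str,
                    price: float, currency: str, in_stock: bool) -> str:
    """Serialize one tagged product as a schema.org Product in JSON-LD."""
    availability = "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "image": {"@type": "ImageObject", "contentUrl": image_url},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product shown in a shoppable lookbook image.
markup = product_json_ld(
    name="Three-seat sofa",
    sku="SOFA-1",
    image_url="https://example.com/images/lookbook-01.jpg",
    price=899.0,
    currency="EUR",
    in_stock=True,
)
print(markup)
```

The resulting string would typically be placed in a `script` element of type `application/ld+json` on the page that hosts the image.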
